The Imagicle recipe.

Now, you’re probably thinking: “Ok, Chris, but which runtime should I pick?”

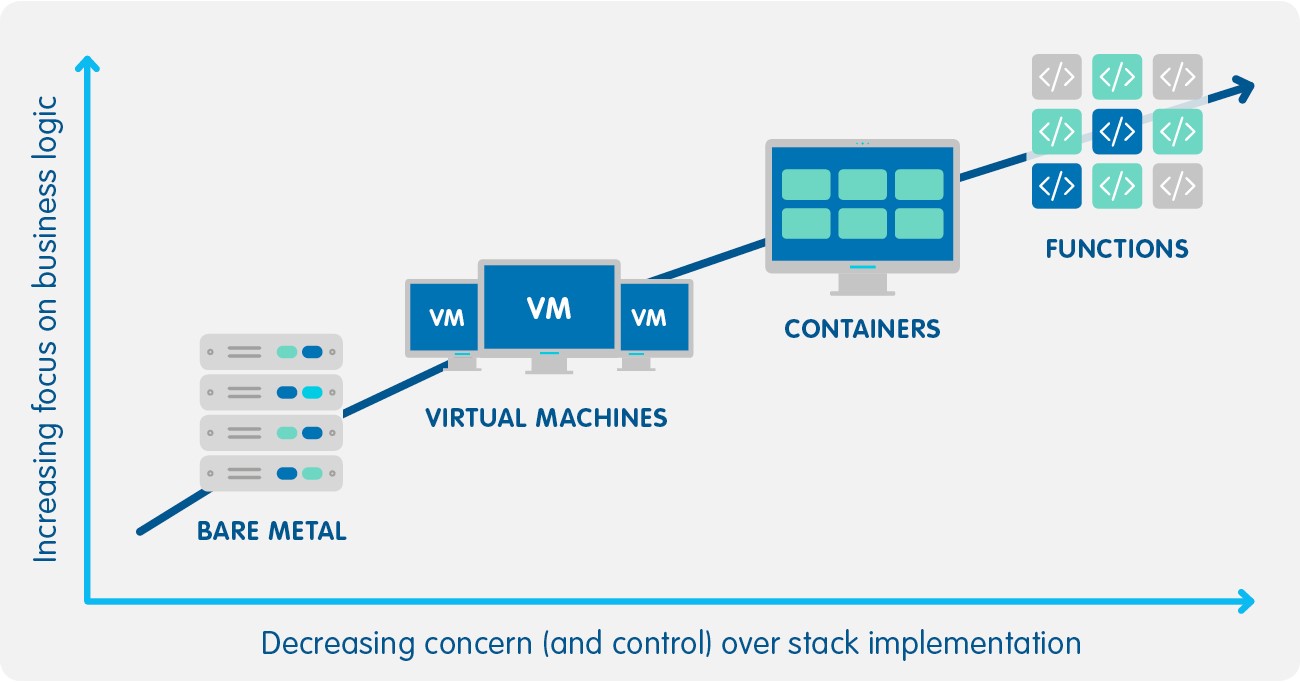

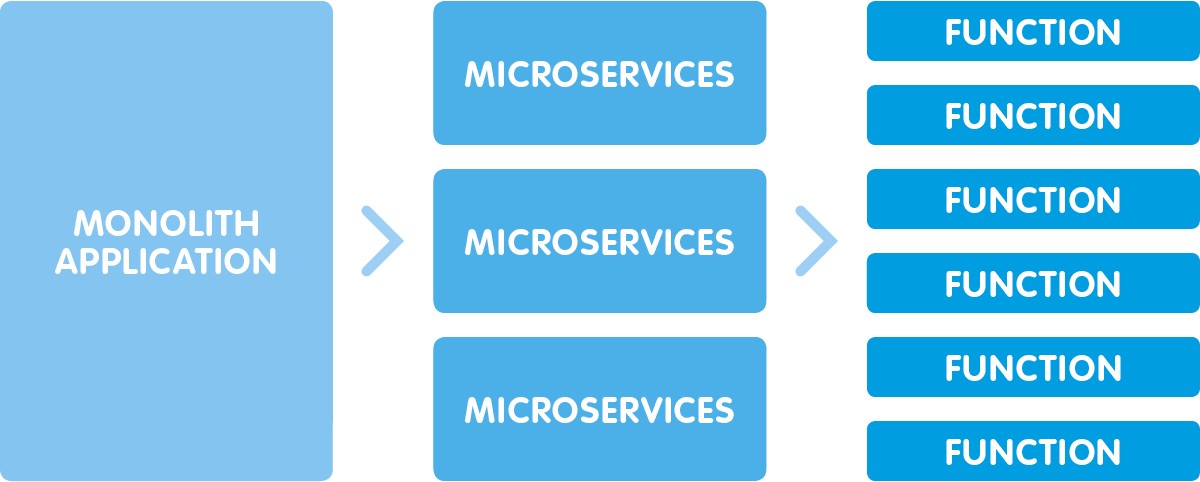

Today there are a lot of runtimes to choose from in the Serverless world.

For example, AWS, currently the most advanced Cloud Provider, supports Go, Python, Node.js, Java, and .NET Core 2.

There is no single right answer to this question, but it may help you to know how the Imagicle team approached this dilemma.

When we began developing the function, we asked ourselves:

- Which language will be the fastest and most efficient?

- Which language will allow us to save money?

- Which language will be the most suitable for our future in the Cloud?

Keep in mind that the function’s cost is calculated considering 3 different factors:

- memory;

- seconds of computing time;

- number of requests.

The first 2 factors are very simple to manage, while the last one can be tricky because, in our case, it is in our clients’ hands.
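To make the three factors concrete, here is a minimal sketch in Go of how they could combine into a monthly bill. The `Pricing` and `Usage` types, the `MonthlyCost` function, and the rates in `main` are my own illustrative assumptions, not a quote of any provider's price list, and the sketch ignores free tiers and billing granularity.

```go
package main

import "fmt"

// Pricing holds per-unit rates. The values used in main are illustrative
// placeholders: always check your provider's current price list.
type Pricing struct {
	PerRequest  float64 // cost of a single invocation
	PerGBSecond float64 // cost of 1 GB of memory held for 1 second
}

// Usage describes one month of traffic for a single function.
type Usage struct {
	Requests       int64   // number of invocations (in the clients' hands)
	AvgDurationSec float64 // average execution time per invocation
	MemoryMB       int     // memory configured for the function
}

// MonthlyCost combines the three factors: memory, computing time, requests.
func MonthlyCost(p Pricing, u Usage) float64 {
	// GB-seconds = invocations * seconds per invocation * memory in GB.
	gbSeconds := float64(u.Requests) * u.AvgDurationSec * float64(u.MemoryMB) / 1024.0
	return float64(u.Requests)*p.PerRequest + gbSeconds*p.PerGBSecond
}

func main() {
	// Hypothetical rates, for illustration only.
	p := Pricing{PerRequest: 0.0000002, PerGBSecond: 0.0000166667}

	// Same traffic and memory, different execution time.
	slow := Usage{Requests: 1000000, AvgDurationSec: 0.4, MemoryMB: 512}
	fast := Usage{Requests: 1000000, AvgDurationSec: 0.1, MemoryMB: 512}

	fmt.Printf("slower runtime: $%.2f\n", MonthlyCost(p, slow))
	fmt.Printf("faster runtime: $%.2f\n", MonthlyCost(p, fast))
}
```

With the number of requests fixed, the only levers left are memory and execution time, which is why the speed of the runtime matters so much for the final bill.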

After some technical evaluation, we adopted Go, an open source programming language, because:

- it’s faster than other languages (less execution time = less Cloud cost);

- it is very simple to develop with;

- it produces only one distributable file (easy to deploy);

- in my opinion, it’s the language of the Cloud, just as Python was the language of data science and ML, Scala was the language of Big Data, Java was the language of enterprise backend, PHP of the web, and so on.

To complete the recipe, we seasoned it with a pinch of Serverless Framework, which lets us manage the whole lifecycle of our functions and all of our IaC (infrastructure as code).
