Loris Pozzobon - 14 August, 2019 - 11 min read

Why choose emergent design for Agile software development.

"The best architectures, requirements, and designs emerge from self-organizing teams." This is one of the Principles behind the Agile Manifesto, which I briefly discussed in a previous post about Sprint Planning. It turns out to be very relevant here too: today we'll look at which elements we can leverage to build shock-proof software architecture, and at how Imagicle's self-organizing teams let the design emerge.

Building a bridge. The problems with upfront design.

Emergent design.

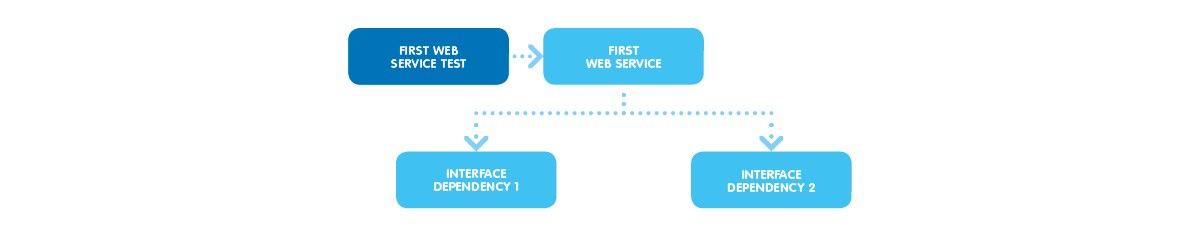

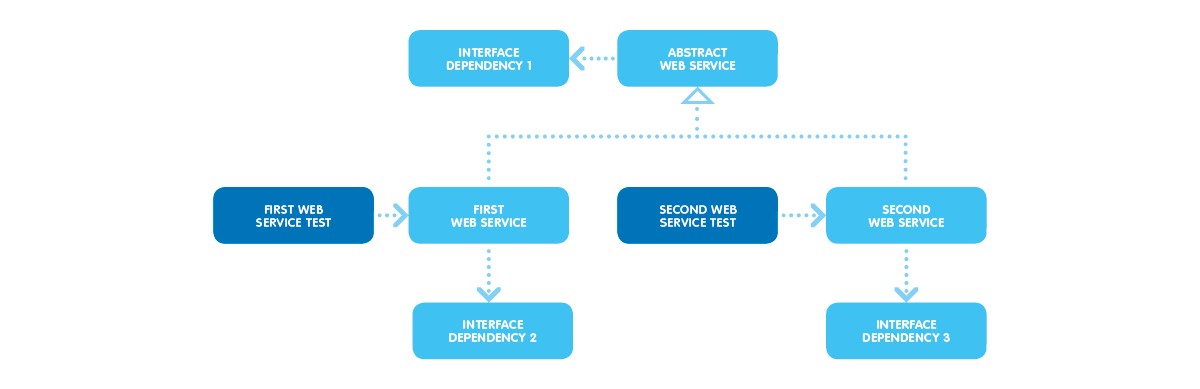

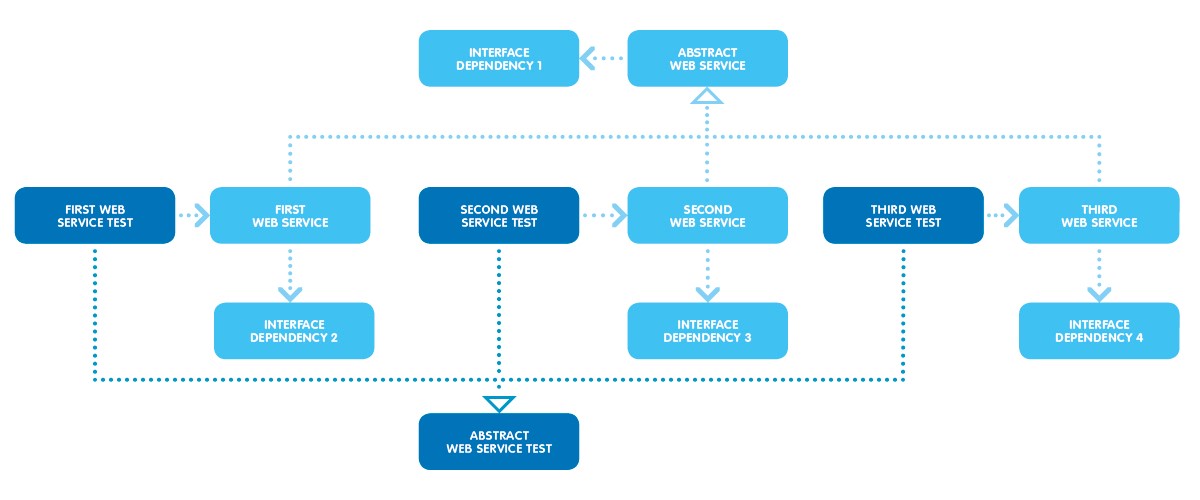

A real use case: the Imagicle UCX Suite Web Services.

And what about the tests?

But, as you can guess, it’s not all peaches and cream. Another trap is lurking.

Mind the trap.

Strange case of software design.

Conclusions.

#stayimagicle
